Maller’s Optimization to Reduce Proof Size

In the PLONK paper, the authors use an optimization due to Mary Maller to reduce the proof size.

Explanation

Maller’s optimization is used in the “polynomial dance” between the prover and the verifier to reduce the number of openings the prover sends.

Recall that the polynomial dance is the process where the verifier and the prover form polynomials together so that:

- the prover doesn’t leak anything important to the verifier

- the verifier doesn’t give the prover too much freedom

In the dance, the prover can additionally perform some steps that will keep the same properties but with reduced communication.

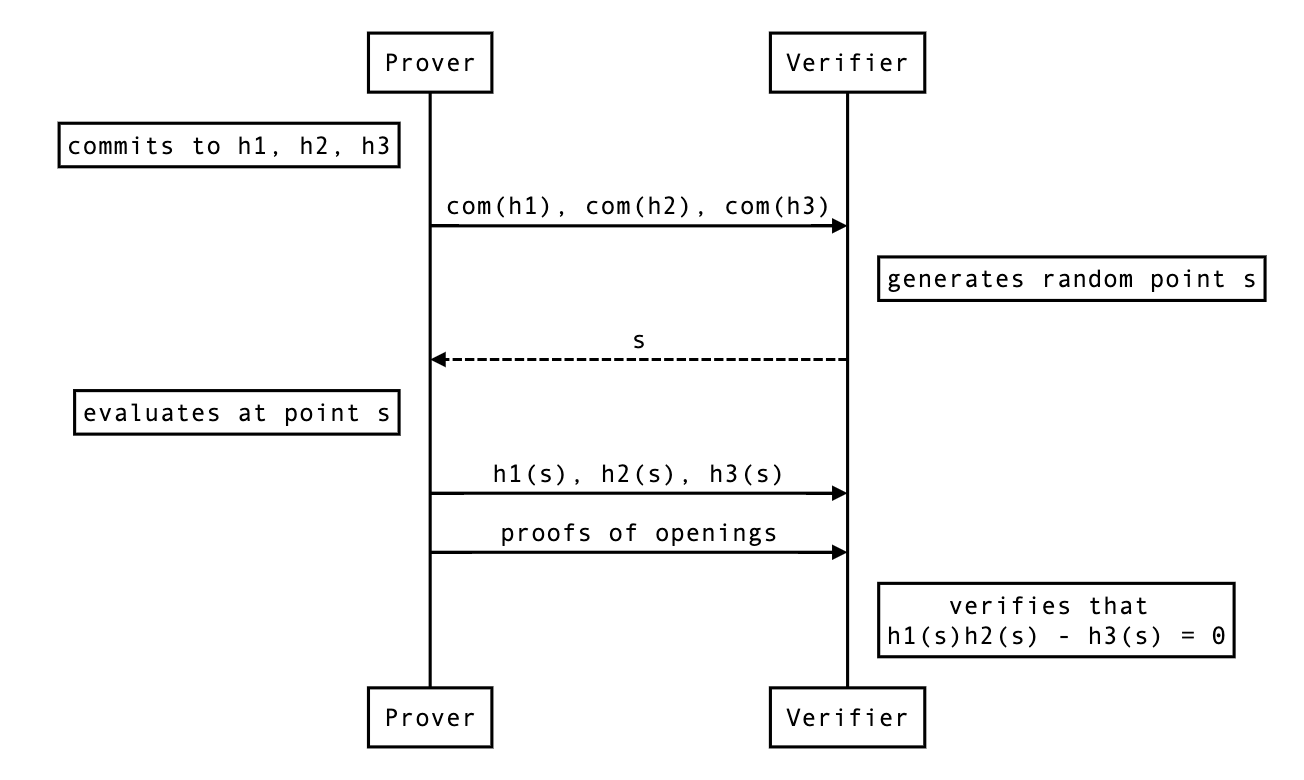

Let’s see the protocol where Prover wants to prove to Verifier that

$$\forall x \in \mathbb{F}, \quad h_1(x) h_2(x) - h_3(x) = 0$$

given commitments of $h_1, h_2, h_3$,

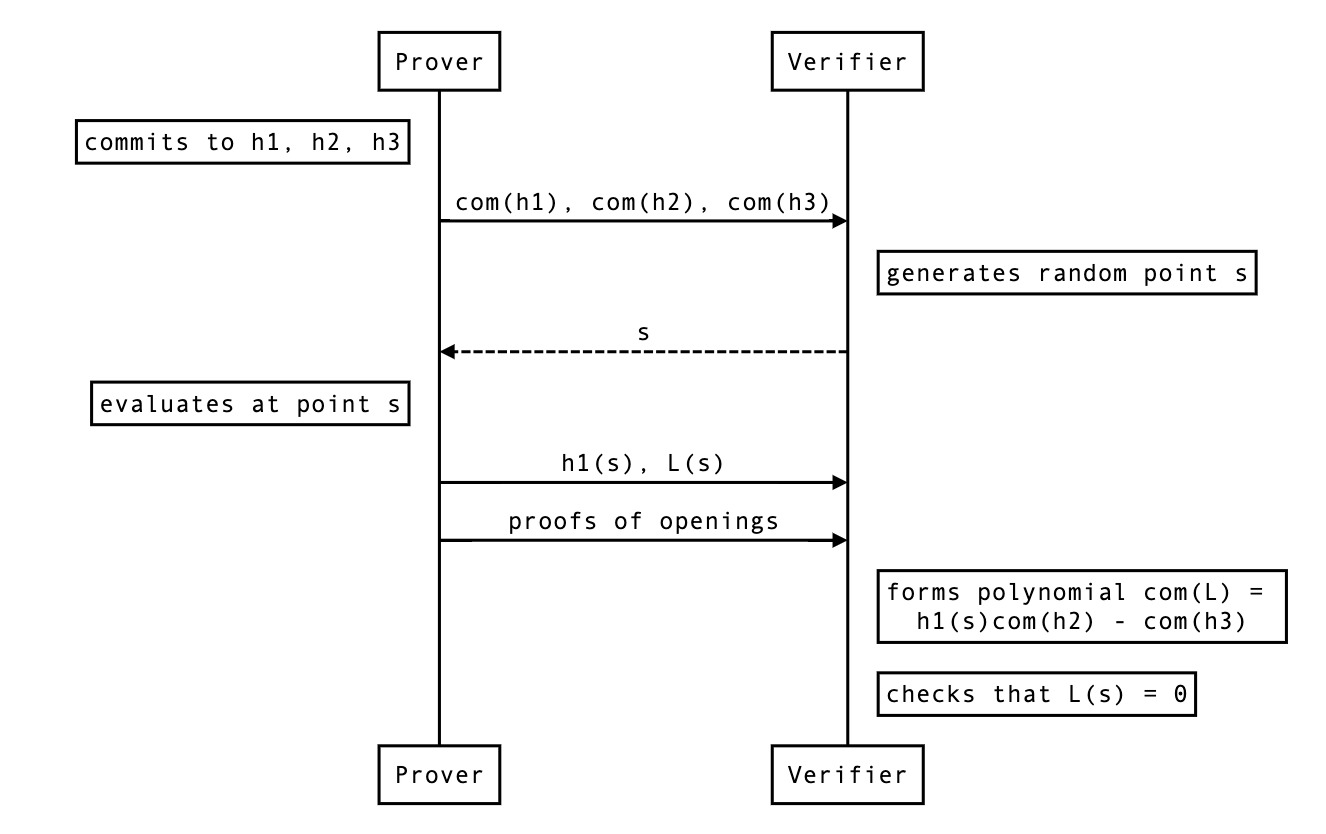

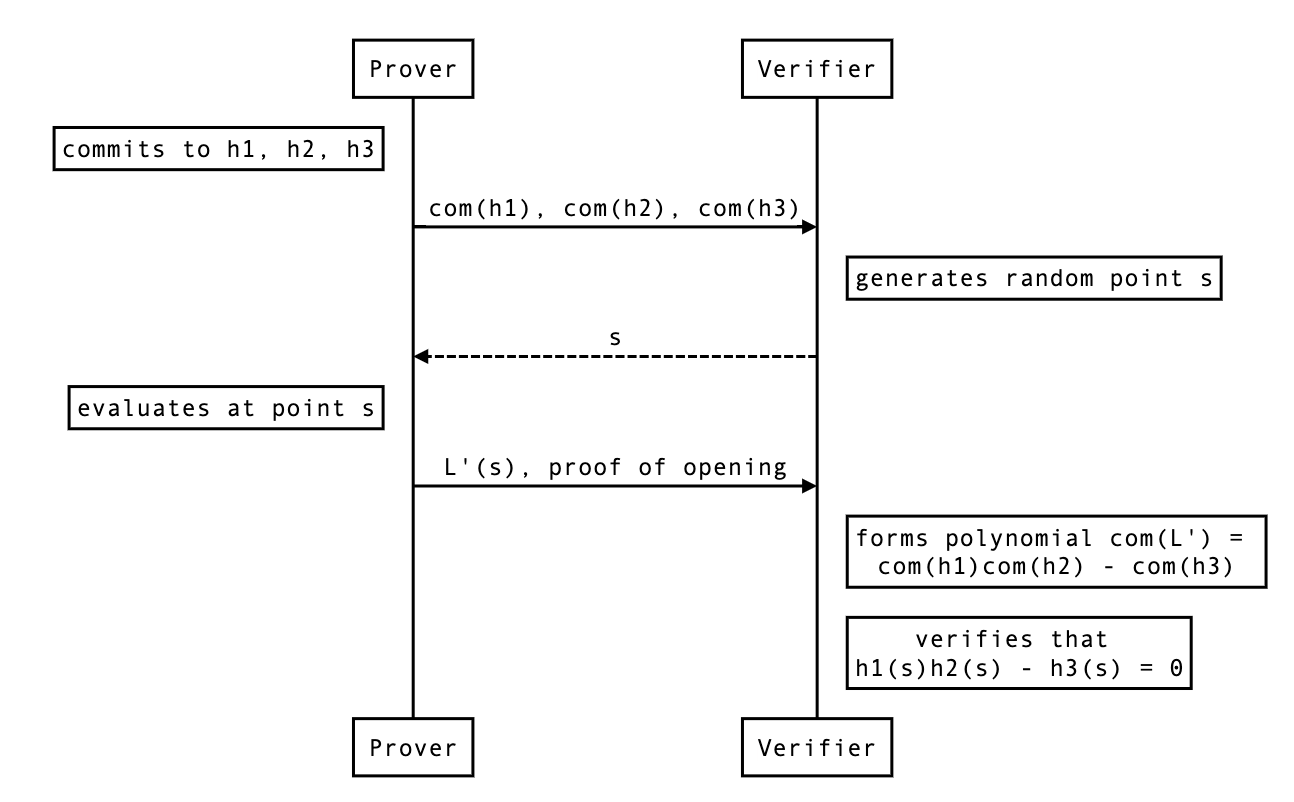

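A shorter proof exists. Essentially, if the verifier already has the opening $h_1(s)$, they can reduce the problem to showing that

$$L(s) = 0 \quad \text{where} \quad L(x) = h_1(s) h_2(x) - h_3(x)$$

given commitments of $h_1, h_2, h_3$ and the evaluation of $h_1$ at a point $s$.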
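The reduction above can be sketched with a toy commitment scheme. This is only an illustration under stated assumptions: the relation being proved is taken to be $h_1(x) h_2(x) - h_3(x) = 0$, and the "commitment" is a deliberately insecure stand-in, $g^{f(\tau)}$ in a small group with a publicly known $\tau$. The parameters `Q`, `P`, `G`, `TAU` and the helper names are hypothetical, not taken from the paper. The point is that the verifier can derive a commitment to $L$ from the commitments of $h_2$ and $h_3$ plus the scalar $h_1(s)$, using only operations that are linear in the committed values.

```python
# Toy parameters (hypothetical and insecure): a group of prime order Q inside Z_P^*.
Q = 1019   # size of the scalar field the polynomials live in
P = 2039   # P = 2*Q + 1, so the squares mod P form a subgroup of order Q
G = 4      # a square different from 1, hence a generator of that subgroup
TAU = 123  # stand-in for the secret point of a trusted setup


def evaluate(poly, x):
    """Evaluate a coefficient list (lowest degree first) at x, mod Q."""
    acc = 0
    for coeff in reversed(poly):
        acc = (acc * x + coeff) % Q
    return acc


def commit(poly):
    """Toy commitment G^(poly(TAU)). A real scheme never exposes TAU."""
    return pow(G, evaluate(poly, TAU), P)


def poly_mul(a, b):
    """Multiply two coefficient lists mod Q."""
    out = [0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] = (out[i + j] + x * y) % Q
    return out


def linearize(h1_s, h2, h3):
    """Return L(x) = h1_s * h2(x) - h3(x) as a coefficient list."""
    n = max(len(h2), len(h3))
    h2 = h2 + [0] * (n - len(h2))
    h3 = h3 + [0] * (n - len(h3))
    return [(h1_s * a - b) % Q for a, b in zip(h2, h3)]


# Example polynomials, chosen so that h1 * h2 - h3 is the zero polynomial.
h1 = [3, 1]            # 3 + x
h2 = [5, 0, 2]         # 5 + 2x^2
h3 = poly_mul(h1, h2)

s = 77                 # verifier's challenge point
h1_s = evaluate(h1, s) # the opening the verifier already has

# Prover side: build the linearized polynomial L.
L = linearize(h1_s, h2, h3)

# Verifier side: derive the commitment to L from commitments only.
# Scaling by h1_s is an exponentiation, subtraction is a division in the group.
inv_com_h3 = pow(commit(h3), P - 2, P)
com_L = pow(commit(h2), h1_s, P) * inv_com_h3 % P

assert com_L == commit(L)   # both sides agree on the commitment to L
assert evaluate(L, s) == 0  # the check the verifier ends up making
```

Note that `L` is not the zero polynomial here; it only vanishes at the challenge point `s`, which is what the opening proves.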

Notes

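Why couldn’t the prover open the polynomial $L'(x) = h_1(x) h_2(x) - h_3(x)$ directly?

To check such an opening, the verifier would have to derive a commitment to $L'$ from the commitments of $h_1$, $h_2$ and $h_3$.

The problem here is that you can’t multiply the commitments together without using a pairing (if you’re using a pairing-based polynomial commitment scheme), and you can only use that pairing once in the protocol.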

If you’re using an inner-product-based commitment, you can’t even multiply commitments.
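To make the limitation concrete, here is a small sketch using a toy commitment $g^{f(\tau)}$ (an assumption for illustration only, with made-up parameters and a publicly known $\tau$, so it is not secure). Combining two commitments with the group operation yields a commitment to the sum of the polynomials, not to their product.

```python
# Toy parameters (hypothetical and insecure), a group of prime order Q in Z_P^*.
Q, P, G, TAU = 1019, 2039, 4, 123


def evaluate(poly, x):
    """Evaluate a coefficient list (lowest degree first) at x, mod Q."""
    acc = 0
    for coeff in reversed(poly):
        acc = (acc * x + coeff) % Q
    return acc


def commit(poly):
    """Toy commitment G^(poly(TAU)). A real scheme never exposes TAU."""
    return pow(G, evaluate(poly, TAU), P)


h1 = [3, 1]                  # 3 + x
h2 = [5, 0, 2]               # 5 + 2x^2
h1_plus_h2 = [8, 1, 2]       # 8 + x + 2x^2
h1_times_h2 = [15, 5, 6, 2]  # 15 + 5x + 6x^2 + 2x^3

# The only operation available on commitments is the group operation.
combined = commit(h1) * commit(h2) % P

assert combined == commit(h1_plus_h2)   # the group operation adds the polynomials
assert combined != commit(h1_times_h2)  # it does not multiply them
```

Getting a commitment to the product would require multiplying the hidden exponents, which is what a pairing provides (once) in a pairing-based scheme.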

Open question: where does the multiplication of commitments occur in the pairing-based protocol of PLONK? And how come we can use bootleproof if we need that multiplication of commitments?

Appendix: Original explanation from the paper

https://eprint.iacr.org/2019/953.pdf

For completeness, Lemma 4.7: